Laboratories generate massive volumes of data, yet many still manage it with paper records and scattered spreadsheets. Modern LIMS and ELN systems offer automation, security, and scalability that older methods cannot match. This article explores why making the transition is essential for any lab seeking higher productivity, accuracy, and compliance.

[Read More]

The Decoding the Digital Lab with CSols podcast marks its one-year anniversary, growing from a content initiative into a global hub for laboratory managers, IT directors, and scientific executives navigating digital transformations. Season two brings deeper investigations into supply chains, lab manager insights, and practical roadmaps for 2026.

[Read More]

Drug development depends on accurate, accessible, compliant lab data, yet too often it lives in siloed LIMS, ELN, and instrument databases. A unified lab informatics platform connects these systems into a single environment, enabling seamless data flow from instruments to analysis, reducing friction, supporting compliance, and driving reliable decisions.

[Read More]

This CSols white paper reveals expert-identified STARLIMS techniques that streamline data retrieval and system navigation. Built-in features cut routine task times from minutes to seconds for both scientists and administrators, with practical shortcuts that transform daily workflows and boost laboratory productivity.

[Read More]

A global biopharmaceutical company built an internal application to centralize lab asset tracking and streamline equipment purchase approvals. Kalleid led the change management, training, and UAT workstreams to drive rapid user adoption across global R&D sites and keep the application stable through every release.

[Read More]

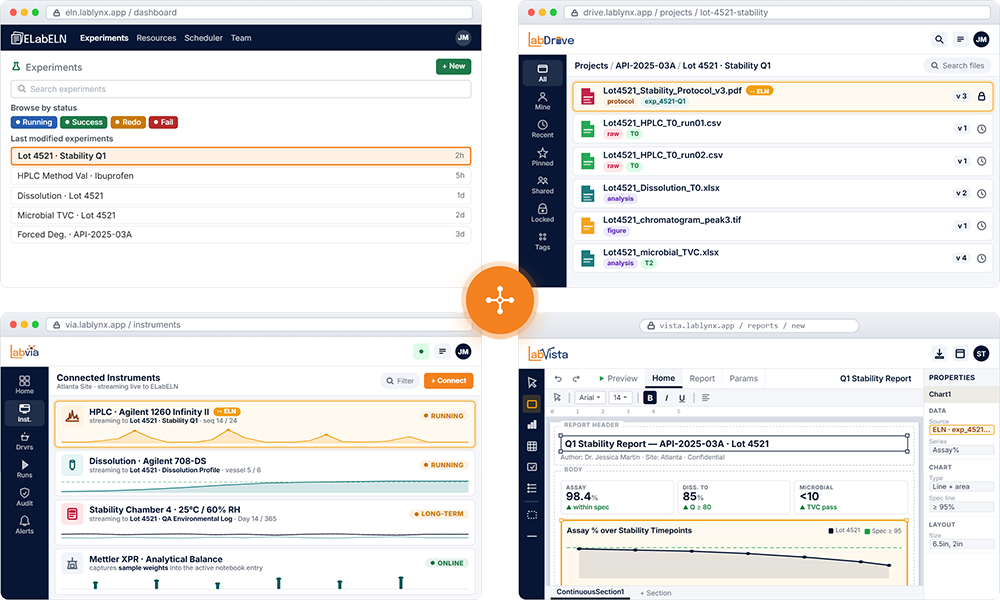

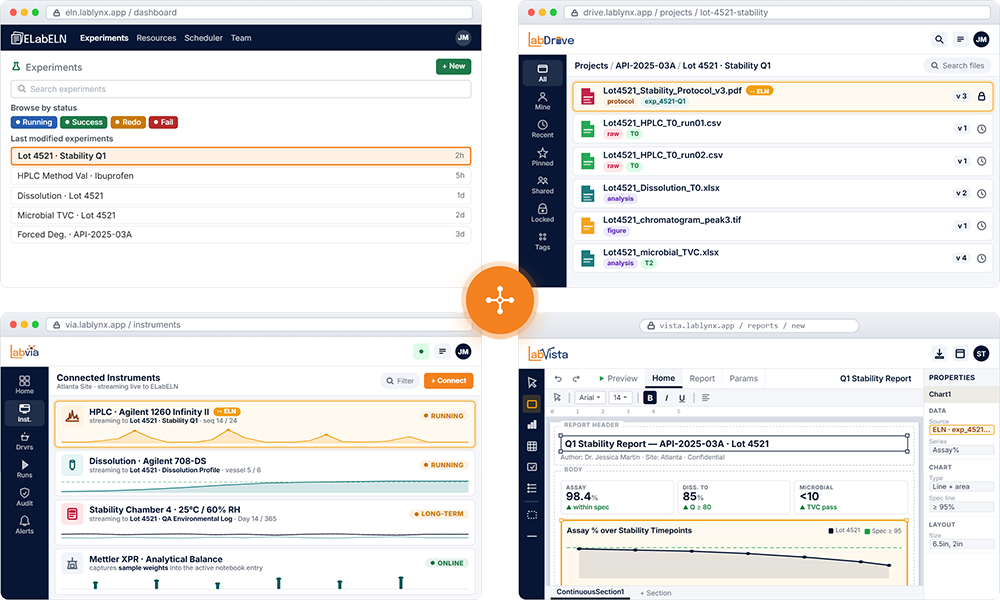

ELabELN Suite combines four integrated modules into one platform: the notebook, file layer, instrument layer, and analytics layer. Research data comes in four shapes, and a notebook alone cannot hold them all. The Suite captures the experiment, its data, the instruments, and the analytics as one connected record from day one.

[Read More]

AI in life sciences is only as strong as the data behind it. Fragmented instrument outputs, sample metadata, and experimental observations limit reproducibility and slow analytics. A unified LabVantage platform brings LIMS, ELN, and SDMS together to deliver structured, traceable data that is ready for advanced analytics and AI workflows.

[Read More]

In this CSols podcast episode, Anthony Lisi of Incyte joins the conversation on the dangers of vibe coding and vendor silos in modern lab automation. The discussion explores how the race to adopt AI without boundaries creates technical debt and security leaks, and what labs can do to protect their intellectual property while still moving fast.

[Read More]

Labbit has launched the Validation Assistant, an AI tool that automates qualification of configuration changes in regulated labs. Building on the 2025 Configuration Assistant, it generates test plans, executes qualification runs, and assembles documentation packages, helping teams deploy changes faster without sacrificing validation rigor.

[Read More]

Per-seat ELN pricing creates a quiet pressure to leave out the people whose work most needs to be captured: rotating students, occasional technicians, visiting collaborators. ELabELN looks at when per-seat math works, when it doesn't, and why an unlimited-user model fits research labs whose membership changes through the year.

[Read More]

AI investments are not delivering returns for many scientific organizations, and the root cause is rarely the technology itself. At a joint Sapio Sciences and Zifo event, R&D leaders agreed that AI readiness in the lab is a foundation problem, not a technology one, and explored how to build the data and governance to make it work.

[Read More]

Most labs without a LIMS run on spreadsheets and paper, until an assessor asks for the full sample history. Disconnected records, manual transcription, broken chain of custody, and corrective actions buried in email all weaken audit defensibility. This article covers five common traceability failures and how a system of record closes the gaps.

[Read More]

The LIMS market is flooded with AI-powered claims, yet labs are still drowning in manual review and broken integrations. There's a real gap between AI that restructures workflows and AI that just adds a chat box. In regulated environments, the question is straightforward: does it actually reduce friction, or does it only look that way?

[Read More]

Biopharma is spending big on R&D informatics, but cutting-edge platforms alone do not guarantee results. Misaligned requirements still drive rework, scope creep, and stalled rollouts. A specialized Business Analyst bridges scientists and developers, turning workflow reality into actionable specs and protecting your software investment.

[Read More]

A buyer's guide for QA directors and lab managers evaluating LIMS in GMP-regulated environments. Eight leading platforms are compared against regulatory compliance, instrument connectivity, batch workflow support, configurability, and scalability. The right choice depends on where your lab sits across scale, speed, and compliance depth.

[Read More]

Canada's biopharma boom faces a coast-to-coast split: discovery happens in western wet labs, AI optimization in eastern dry labs. Proprietary instrument formats and disconnected ELN and LIMS systems turn that distance into a data wall. A vendor-neutral informatics bridge built on FAIR and ALCOA+ keeps the pipeline moving from bench to clinic.

[Read More]

An on-demand Astrix webinar with Seeding Labs CEO Melissa Wu explores how sustainability partnerships can become a strategic advantage for life sciences. The session covers integrating sustainability into strategy, the competitive edge of purpose-driven collaborations, and measurable social return on investment in 2026.

[Read More]

Discover the LabLynx ELabELN Solution, a cloud-based electronic lab notebook that goes beyond traditional ELNs. This presentation shows how ELabELN works with LabDrive, LabVia, and LabVista as a complete data management platform with professional reporting, instrument integration, and audit-ready compliance on an ELN-sized budget.

[Read More]

Most labs we talk to are tracking samples in spreadsheets and filing chain-of-custody on paper. That works until an assessor asks for the complete history of a sample and the answer is a scavenger hunt. This piece walks through five traceability failures we see repeatedly and what changes when the lab has a system of record.

[Read More]

CSols guided a state public health laboratory through a vendor-neutral LIMS selection across 20 departments and two facilities, tackling an aggressive funding deadline, a legacy system with critical gaps, and widespread stakeholder disengagement. The result was a formal RFP with custom demo scripts grounded in real workflows, not marketing claims.

[Read More]
